The public vs. private peering debate section is needed because, as the Peering Coordinator Community points out, the next best alternative to 10G public peering is peeling off cross connects or circuits for private peering. The paper therefore compares these two options.

Definition: The Internet is a network of networks, interconnected in Peering and Transit relationships collectively referred to as a Global Internet Peering Ecosystem.

Definition: Internet Transit is the business relationship whereby one ISP provides (usually sells) access to all destinations in its routing table.

Figure - Transit Relationship - selling access to the entire Internet.

Internet Transit can be thought of as a pipe in the wall that says "Internet this way".

In the illustration above, the Cyan ISP purchases transit from the Orange Transit Provider (sometimes called the "Upstream ISP"), who announces to the Cyan ISP reachability to the entire Internet (shown as many colored networks to the right of the Transit Providers). At the same time, the Transit Provider propagates the Cyan routes across the Internet so that all attached networks know to send packets to the Cyan ISP through the Orange Transit Provider. In this way, all Internet attachments know how to reach the Cyan ISP, and the Cyan ISP knows how to get to all the Internet routes.

Some Characteristics of Internet Transit

Transit is a simple service from the customer perspective. All one needs to do is pay for the Internet Transit service and all traffic sent to the upstream ISP is delivered to the Internet. The transit provider charges on a metered basis, measured on a per-Megabit-per-second basis using the 95th percentile measurement method.

Transit provides a Customer-Supplier Relationship. Some content providers shared with the author that they prefer a transit service (paying customer) relationship with ISPs for business reasons as well. They argue that they will get better service with a paid relationship than with any free or bartered relationship. They believe that the threat of lost revenue is greater than the threat of terminating a peering arrangement if performance of the interconnection is inadequate.

Transit may have SLAs. Service Level Agreements and rigorous contracts with financial penalties for failure to meet service levels may feel comforting but are widely dismissed by the ISPs that we spoke with as merely insurance policies. The ISPs said that it is common practice to simply price the service higher with SLAs, with increased pricing proportional to the likelihood of their failure to meet these requirements. Then the customer has to notice, file for the SLA credits, and check to see that they are indeed applied. There are many ways for SLAs to simply increase margins for the ISPs without needing to improve the service.

Transit Commits and Discounts. Upstream ISPs often provide volume discounts based on negotiated commit levels. Thus, if you commit to 10 Gigabits-per-second of transit per month, you will likely get a better unit price than if you commit to only 1 Gigabit-per-second of transit per month. However, you are on the hook for (at least) the commit level worth of transit regardless of how much traffic you send.

Transit is a commodity. There is debate within the community on the differences between transit from a low cost provider and the Internet transit service delivered from a higher priced provider. The higher priced providers argue that they have better quality equipment (routers vs. switches).

Transit is a metered Service. The more you send or receive, the more you pay. (There are other models such as all-you-can-eat, flat monthly rate plans, unmetered with bandwidth caps, etc. but at the scale of networking where Internet Peering makes sense the Internet Transit services are metered.)
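To make the metered billing and commit mechanics above concrete, here is a minimal Python sketch. It assumes the common convention of sorting periodic utilization samples, discarding the top 5 percent, and billing on the highest remaining sample, with the commit level acting as a floor. The function names, the sampling scheme, and any prices passed in are illustrative assumptions, not figures from the ISPs interviewed.

```python
def percentile_95(samples_mbps):
    """Return the 95th percentile sample: sort, ignore the top 5%, take the highest remaining."""
    if not samples_mbps:
        raise ValueError("need at least one utilization sample")
    ordered = sorted(samples_mbps)
    # Integer ceiling of 0.95 * n, converted to a zero-based index.
    index = (95 * len(ordered) + 99) // 100 - 1
    return ordered[index]


def monthly_transit_bill(samples_mbps, commit_mbps, price_per_mbps):
    """Bill on the larger of the measured 95th percentile and the commit level."""
    billable_mbps = max(percentile_95(samples_mbps), commit_mbps)
    return billable_mbps * price_per_mbps
```

With this sketch, a customer whose measured 95th percentile falls below the commit still pays for the full commit, which is the "on the hook" effect described above.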

Definition: Internet Peering is the business relationship whereby companies reciprocally provide access to each other's customers.

This definition applies equally to Internet Service Providers (ISPs), Content Distribution Networks (CDNs), and Large Scale Network Savvy Content Providers.

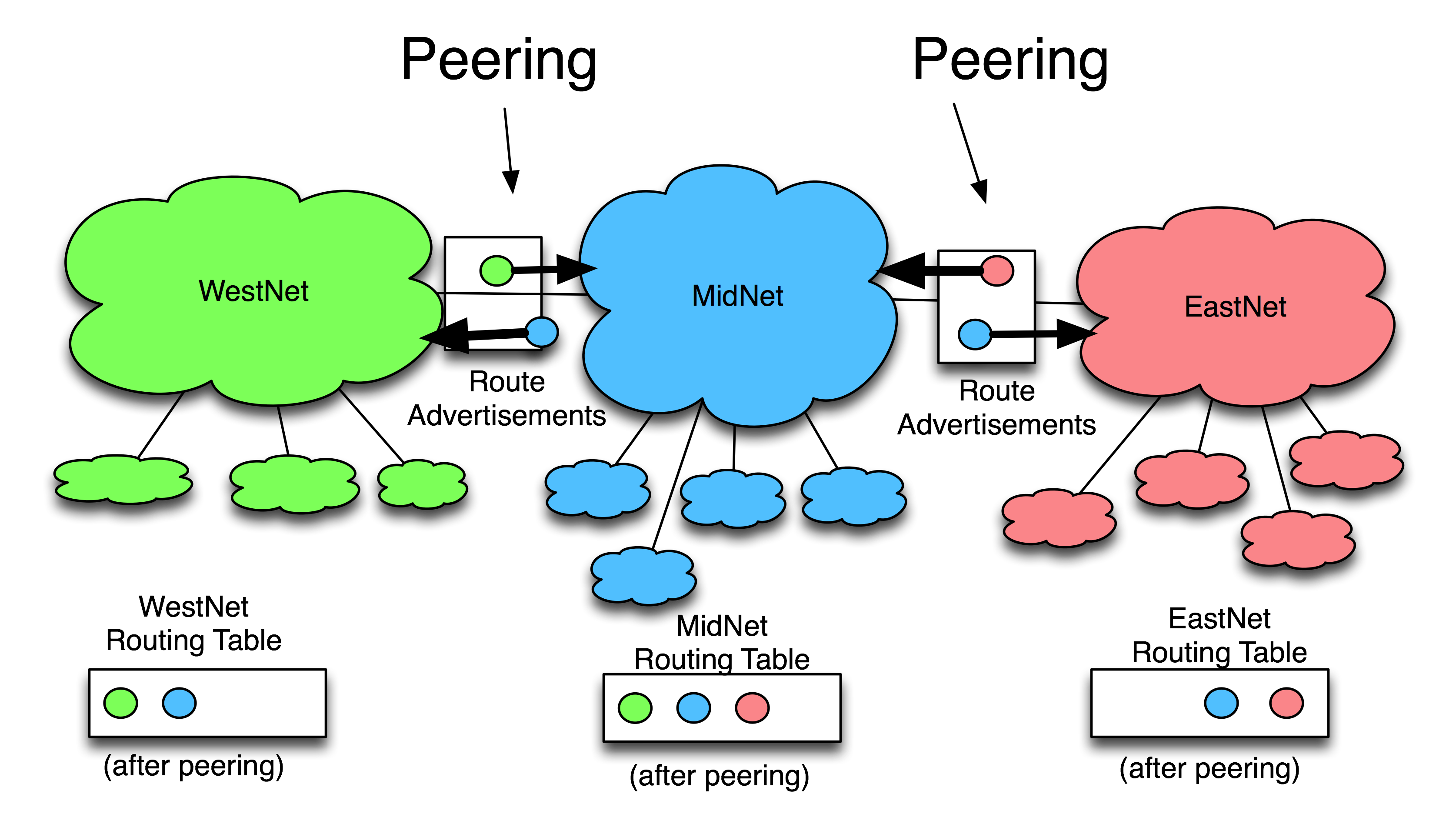

To illustrate peering, consider the figure below showing a much simplified Internet: an Internet with only three ISPs, WestNet, MidNet, and EastNet.

WestNet is an ISP with green customers, MidNet is an ISP with blue customers, and EastNet is an ISP with red customers.

WestNet is in a Peering relationship with MidNet whereby WestNet learns how to reach MidNet's blue customers, and MidNet reciprocally learns how to reach WestNet's green customers.

EastNet is in a Peering relationship with MidNet whereby EastNet learns how to reach MidNet's blue customers, and MidNet reciprocally learns how to reach EastNet's red customers.

Peering is typically a free arrangement, with each side deriving about the same value from the reciprocal arrangement. If there is not equal value, sometimes one party or the other pushes for a Paid Peering relationship.

Important as well is that peering is not a transitive relationship. WestNet peering with MidNet and EastNet peering with MidNet does not mean EastNet customers can reach WestNet customers. WestNet only knows how to get to blue and green customers, and EastNet knows how to reach only blue and red customers. The fact that they both peer with MidNet is inconsequential; peering is a non-transitive relationship.
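The non-transitive behavior can be sketched in a few lines of Python. This is a toy model of the three-ISP example, assuming each ISP learns only its own customer routes plus those of its direct peers; the data structures and the function name are illustrative, not part of any routing software.

```python
# Customer "colors" per ISP and the two peering relationships from the example.
CUSTOMERS = {"WestNet": {"green"}, "MidNet": {"blue"}, "EastNet": {"red"}}
PEERINGS = [("WestNet", "MidNet"), ("EastNet", "MidNet")]


def reachable_customers(isp, customers=CUSTOMERS, peerings=PEERINGS):
    """Return the customer sets an ISP can reach: its own plus its direct peers' only."""
    routes = set(customers[isp])
    for a, b in peerings:
        if a == isp:
            routes |= customers[b]
        elif b == isp:
            routes |= customers[a]
    # Routes learned from a peer are not passed on to other peers,
    # so there is deliberately no second hop here.
    return routes
```

In this model WestNet reaches only green and blue customers and EastNet reaches only blue and red customers, even though both peer with MidNet.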

Next we discuss the rationale given for public peering.

What is Public Peering?

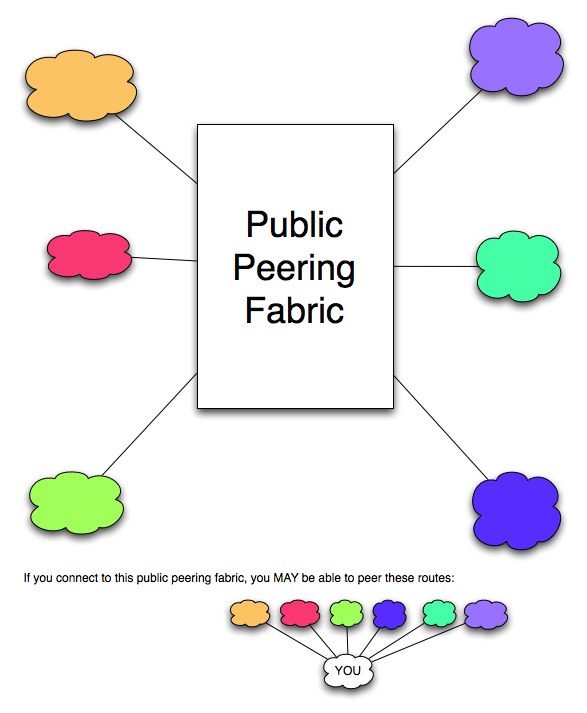

Definition: Public Peering is Internet Peering across a shared (more than two party) peering fabric.

Since Ethernet is the most common public peering fabric in the world, we will assume Ethernet is used for public peering from this point on in the paper. Public peers connect their router Ethernet interface card(s) to the public peering fabric and (potentially) peer with many of the other attached public peers.

Peers exchange access to each other's customers for free, but what are the costs associated with Public Peering? If we assume that the peering is to be done by collocating equipment at an Internet Exchange Point (IX), public peering requires

1) a router with

a. an interface card suitable to connect to the public peering fabric, and

b. an interface card suitable to send and receive traffic back to the rest of the network,

2) a port on the public peering fabric,

3) colocation space at an Internet Exchange Point (the European IX Model is a bit different here).

Note that we are ignoring the cost of the circuit(s) into the Internet Exchange (IX) in this analysis for two reasons. First, for an ISP or Large Scale Network Savvy Content Provider, these costs would be the same for both public and private peering at the scales that we are discussing. Second, companies looking to peer toward 10G are probably also purchasing transit at the IX, selling transit at the IX, or otherwise establishing a presence at the IX for reasons beyond peering. If true, then the transport costs of peering will at least partially be covered by these other activities. And here again, any incremental costs will be incurred whether publicly or privately peering.

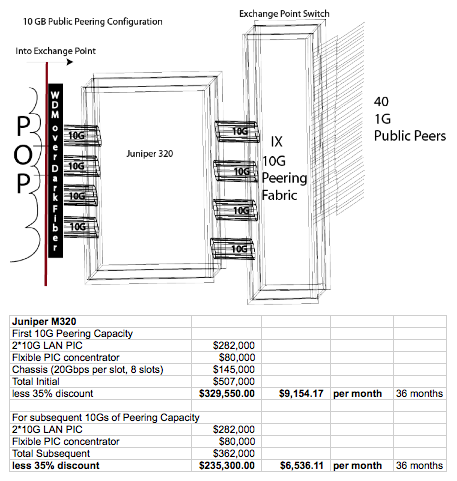

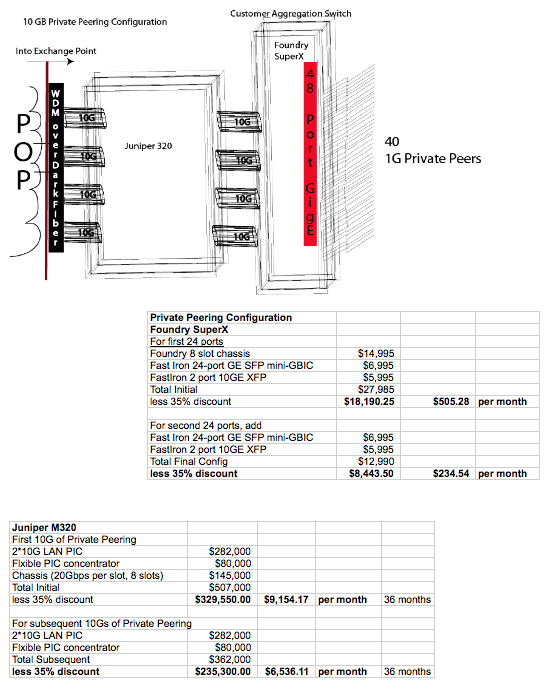

For both cases (10G public and 1G private peering) we will use the Juniper M320 router, with the pricing provided below. We will allocate costs incrementally; as we increase the number of peering sessions we will apply the costs of the corresponding interface cards as required, and we will amortize the cost of all peering equipment over 36 months. Note that we also assume the router vendor discounts the price of routers by 35%, which is typical in the industry.

Figure 2 - Public Peering Model Hardware for Publicly Peering with 40+ single gigabit peers

We assume that the ISP anticipates requiring 10G of peering capacity into the Exchange Point and therefore we will start out with 10G of ingress capacity into the IX. From a practical perspective, this will most likely be done by leasing dark fiber and running the 10GE to their metro Point of Presence (POP). We will assume that the ISP will allocate additional strands and/or install Wave Division Multiplexing (WDM) and other equipment needed to obtain additional 10G ingress capacity. Since this capacity is required regardless of the public vs. private peering method, these costs are a wash and not taken into account in this paper.

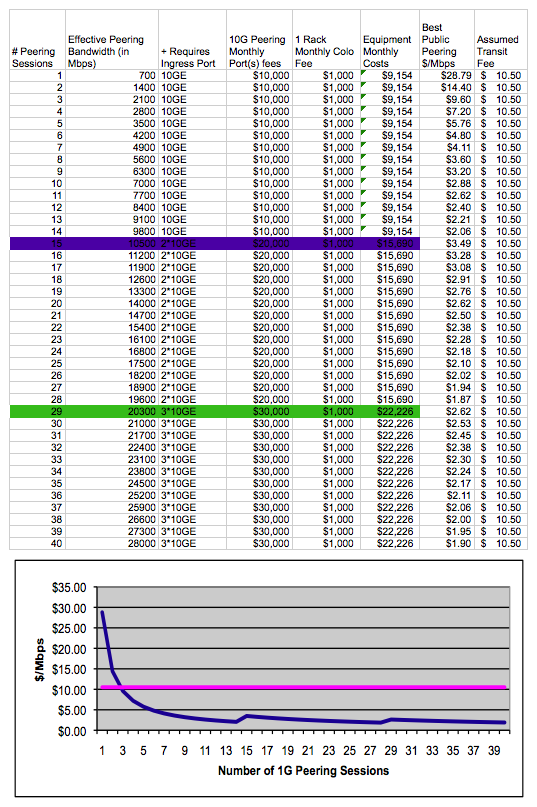

For modeling purposes we will assume that an ISP has an aggregation ratio of 1.5:1. One peer's peaks may match up with another peer's valleys when the traffic is aggregated out a single large pipe, providing "aggregation efficiencies." This 1.5:1 aggregation ratio means that an ISP aggregating peering traffic over a single 10G port can statistically multiplex up to 14 1-Gigabit-Ethernet peers' worth of peering capacity without needing to upgrade the ingress or public peering port capacity. When the 15th peer is added, though, the 10G peering port and the ingress 10G port will need to be upgraded and the corresponding costs are added to the model.
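The upgrade rule can be expressed as a small calculation. The following Python sketch assumes every peer is provisioned at 1G and that an upgrade is triggered as soon as the aggregated load (peer capacity divided by the 1.5:1 ratio) reaches the installed 10G capacity, which is one reading of the "15th peer" rule; the names are illustrative.

```python
from fractions import Fraction

AGGREGATION_RATIO = Fraction(3, 2)  # the 1.5:1 ratio assumed in the model
PORT_GBPS = 10                      # capacity of one 10G peering/ingress port
PEER_GBPS = 1                       # each peer is assumed to be a 1G peer


def ten_gig_ports_required(peer_count):
    """Return how many 10G ports are needed for a given number of 1G peers."""
    aggregated_gbps = Fraction(peer_count * PEER_GBPS) / AGGREGATION_RATIO
    ports = 1
    # Add a port whenever the aggregated load reaches the installed capacity.
    while aggregated_gbps >= ports * PORT_GBPS:
        ports += 1
    return ports
```

Under these assumptions one port carries 14 peers, the 15th peer triggers the second port, and the second port lasts through the 29th peer.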

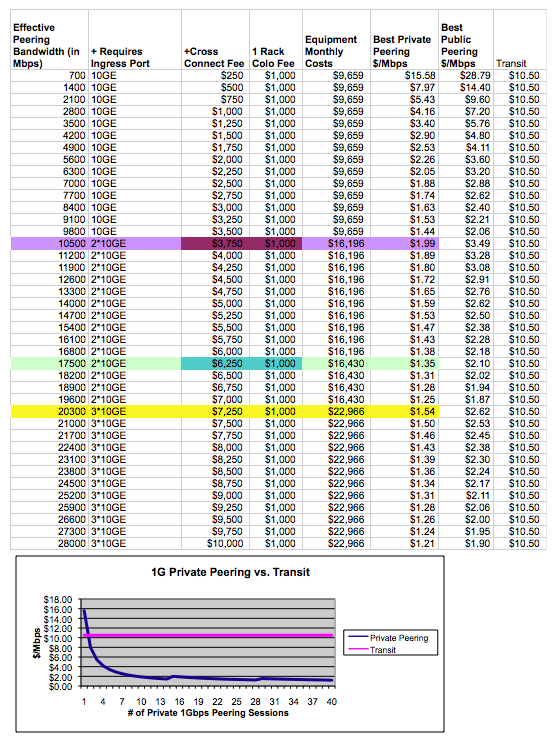

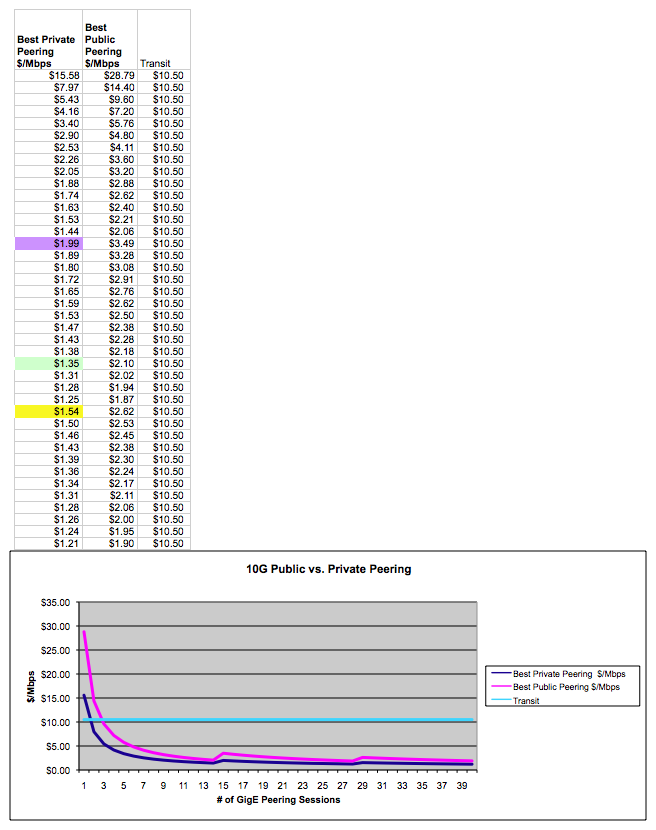

We are assuming for modeling purposes that the 10G peering port at the IX costs $10,000 per month.

We are assuming for modeling purposes that rack fees of $1,000 per month are charged at the IX. Some in Europe suggested that this cost was too high; others suggested that, given the power requirements for the equipment described, this cost should be doubled. These Public Peering costs allocated across peering sessions are shown in the spreadsheet below. Note that the colored lines highlight the points where additional hardware is added to the peering configuration.

The last column "Best Public Peering" is a measure of the best cost of peering a 10G public peer can hope for, assuming that the peering session runs to the "Effective Peering Bandwidth", the maximum peak peering before the peering capacity should be upgraded for that peer. This is captured in the graph below which also highlights the incremental hardware additions required for peering at 10G for each additional peer.
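The per-Mbps allocation behind such a spreadsheet can be sketched as follows. This is a simplified Python model, not the author's spreadsheet: it spreads discounted hardware cost over an amortization period, adds the monthly port and rack fees, and divides by the traffic carried. The hardware list price and amortization period are parameters the caller must supply; any values used to exercise it are hypothetical.

```python
def monthly_peering_cost(list_price, discount, amortization_months,
                         port_fee, ports, rack_fee):
    """Monthly cost: amortized discounted hardware plus recurring IX fees."""
    if amortization_months <= 0:
        raise ValueError("amortization_months must be positive")
    hardware_per_month = list_price * (1 - discount) / amortization_months
    return hardware_per_month + port_fee * ports + rack_fee


def cost_per_mbps(monthly_cost, peers, mbps_per_peer):
    """Allocate the monthly cost across the traffic exchanged with all peers."""
    if peers <= 0 or mbps_per_peer <= 0:
        raise ValueError("peers and mbps_per_peer must be positive")
    return monthly_cost / (peers * mbps_per_peer)
```

The $10,000 port fee, $1,000 rack fee, and 35% discount from the text can be passed in directly; because the fixed costs are shared, the per-Mbps figure falls as more peers are added until the next hardware step is reached.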

Here is a sample configuration (see spreadsheet below) for privately peering up to 40 GE peers. For private peering we will add the Foundry SuperX switch to the M320 Peering Router, and allocate each of two 24-port GE blades to the SuperX as needed. We will also add additional 10G ingress and 10G aggregate peering bandwidth into the equation as needed for the corresponding additional peering sessions.

Public and Private Graphics look similar from afar!

While the public and private pictures may seem similar, they are not. With public peering, the Exchange Point Operator runs the public peering switch on behalf of the IX participants. With Private Peering, the only people connected to the switch are those that peer with the ISP in question, and the ISP in question operates the switch. As you can see in the diagram above, there are no active network elements involved in peering except for those operated by the peers.

These Private Peering costs allocated across peering sessions are shown in the spreadsheet below. Note that the colored lines highlight the points where additional hardware is added to the peering configuration.

So now we have seen the configurations for both public and private peering, and they both make sense as the volume of traffic grows. Yet there are strong arguments on each side for preferring one over the other.

In the Peering Coordinator Community this issue has generated much debate. Let's explore the strongest arguments on each side of the debate, presented below.

a. A network can easily aggregate a large number of relatively small peering sessions across a single fixed-cost peering port, with zero incremental cost per peer. (Private peering requires additional cross connects and potentially an additional interface card, so there are real costs associated with each incremental peering session.) Small peering sessions often exhibit a high degree of variability in their traffic levels, making them perfect for aggregation. Since not all peers peak at the same time, multiple peers can be multiplexed onto the shared peering fabric, with one peer's peak traffic filling in the valleys of another peer's traffic. This helps make peering very cost effective: "I can't afford to dedicate a whole gigE card to private peering with this guy, but public peering is a no-brainer."

b. Public peering ports usually have very large gradations of bandwidth: 100Mbps Ethernet upgrades to 1Gbps Ethernet, which upgrades to 10Gbps Ethernet. With such large gradations, it is easier for smaller peers to maintain several times more capacity via public peering than they are currently using, which reduces the likelihood of congestion due to shifting traffic patterns, bursty traffic, or uncontrolled Denial of Service attacks. "Some peers aren't as responsive to upgrading their peering infrastructure, nor are they of similar mind with respect for the desire for peering bandwidth headroom." The large gradations of public peering bandwidth help reconcile these two issues.

a. Public peering is the easiest and fastest way to both turn up and turn down a peering session, since no physical work is required. Peering is soft configured by the two parties on the router and the peering session is up.

b. It is common for a network to set up a trial peering session to determine the amount of traffic that would be exchanged should a session be turned up. If there is public peering capacity available, there is no incremental cost or extra administrative work required to turn up a trial peer, and the information gathered may prevent choosing an incorrect private peering port size if the traffic is moved to a private peer later.

c. Many Peering Coordinators must work within a budget, and do not have decision making authority for purchases within their company. Once the public peering switch port is ordered, there is no additional cost and therefore no additional hurdle to peering for the Peering Coordinator.

d. Public Peering provides financial predictability. The hardware requirements and monthly recurring costs of peering are the same every month. This makes planning and budgeting much easier.

e. 10G Public Peering scales large peering sessions (those greater than 1Gbps) seamlessly, while private peering beyond gigE capacities requires private peering at 10G (very expensive), or connecting multiple gigEs together, which can be tricky.

a. Corporate and Enterprise customers continue to ask to see the list of the ISP's public peering points.

a. Across Europe, where public peering across multiple collocation centers is the norm, private peering is often a much more expensive solution. Purchasing private peering circuits within a metro is potentially very expensive, while the same traffic can traverse a shared peering fabric for much less.

Here are the strongest arguments private peering advocates shared with the author.

a. SNMP Counters can be easily collected on each peering port to monitor the utilization of the Peering Session resources. No time intensive Netflow or expensive network analysis software is required to sort through shared peering fabric data to determine per-peering-session traffic volume.

b. Greater Visibility: No Blind Oversubscription Problem. With public peering, the remote peer could be congesting his port with the other peering sessions and you have no visibility into their public peering port utilization. Packets could be dropped due to port oversubscription resulting in poor peering performance. Since Private Peering involves only the two parties, when the port reaches an agreed upon utilization (say 60% utilization for example), both parties can see that it is time to upgrade the peering session.
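As a rough illustration of how per-port counters translate into a utilization figure, here is a small Python sketch. It assumes two readings of a 64-bit octet counter (such as the IF-MIB `ifHCInOctets` object) have already been collected by whatever polling tool is in use; the polling itself is not shown, and the function names are illustrative. The 60% threshold is the example figure from the text.

```python
COUNTER64_MODULUS = 2 ** 64  # 64-bit SNMP counters wrap back to zero


def port_utilization(octets_start, octets_end, interval_seconds, port_bps):
    """Return average utilization (0.0 to 1.0) between two octet counter readings."""
    if interval_seconds <= 0 or port_bps <= 0:
        raise ValueError("interval_seconds and port_bps must be positive")
    # The modulo handles a single counter wrap between the two readings.
    delta_octets = (octets_end - octets_start) % COUNTER64_MODULUS
    return delta_octets * 8 / (interval_seconds * port_bps)


def needs_upgrade(utilization, threshold=0.60):
    """True once the port reaches the agreed-upon utilization threshold."""
    return utilization >= threshold
```

Because a private peering port carries only one peer's traffic, both parties can run the same calculation on their own side of the link and arrive at the same answer.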

a. If the expected peering port and cross connect costs were $400 per month and the parties expected to send 40Mbps to each other, the EPPR would be $400/40Mbps=$10/Mbps, a very attractive price in today's transit market.

b. For those who exchange traffic with a few large peers, the 80%/20% rule applies; the majority of peering benefits can be derived by peering with the 20% of potential peers that deliver 80% of your traffic. This suggests that fewer, larger peers are preferable to picking up lots of small peers across a public peering fabric.
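The arithmetic in the EPPR example is simple enough to script. The Python sketch below divides the monthly cost of a private peering session by the traffic expected over it and compares the result with a transit price; the function names are illustrative and the transit price is whatever the caller supplies.

```python
def effective_peering_price(monthly_cost_usd, traffic_mbps):
    """Monthly peering cost divided by traffic exchanged, in dollars per Mbps."""
    if traffic_mbps <= 0:
        raise ValueError("traffic_mbps must be positive")
    return monthly_cost_usd / traffic_mbps


def peering_beats_transit(monthly_cost_usd, traffic_mbps, transit_price_per_mbps):
    """True when the per-Mbps cost of the peering session is below the transit price."""
    return effective_peering_price(monthly_cost_usd, traffic_mbps) < transit_price_per_mbps
```

For the $400 port and cross connect carrying 40Mbps, `effective_peering_price(400, 40)` reproduces the $10/Mbps figure above.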

a. Private Peering involves fewer network components that could break. It should be noted that this argument weakens when the "private" peering sessions are provisioned across VLANs, through optical interconnects, telco provisioned SONET services, or other active electronics.

b. An architecture of private peering removes the variability of support processes across IXes. Across Europe, each IX is different, and a NOC Operator may need to understand the processes, the levels of support and debugging capabilities of the switch support staff on call at the IX, and may even need to craft NOC scripts to navigate through the IX operations tasks. A private peering architecture provides consistency that helps the NOC debug and fix things more rapidly.

c. The greater fear is that layer 2 fabrics could be connected through other layer two fabrics perhaps without the knowledge or consent of the peer, resulting in a very difficult debugging and diagnostics situation if a peering failure occurs.

a. A private peering network that is directly connected only with those with whom there is an explicit peering arrangement is more secure than a network that connects to a public peering fabric that includes participants with whom there is no relationship with the company. There is some history here; early exchange points were places where "traffic stealing" was accomplished by pointing default at an unsuspecting and poorly secured public peer. Other problems included peers tunneling traffic across the ocean across a peer's network. These things are explicitly disallowed in most peering and IX terms and conditions and can be further secured through filtering, but are still seen as potential hazards minimized by privately peering.

b. An architecture that solely privately peers is less likely to be compromised. Since fiber has no active components that can be administered, there is nothing that can be broken into. With a switch or other active electronics in between peers, there is the possibility that traffic can be captured at the peering point without their detection. It is relatively easy to mirror a public peering port as compared with tapping into private peering fiber cross connects without the detection of the peers involved. A few ISPs pointed to technology that can passively tap into fiber interconnects, which if true, would decrease the strength of this argument.

5. Private Peering Inclination Signals a More Attractive Peer.

a. The "Big Players" privately peer with each other and some even loathe Public Peering Fabrics for historical reasons. Adopting this attitude puts one in the company of the largest Tier 1 ISPs in the world. "For certain very large networks, public peering makes no sense at all. For certain very small networks, public peering may make perfect sense." Or put more harshly, "if you think that public peering is a good idea, you're just not large enough yet."

A combination of public and private peering is the most common, where ISPs peer publicly and "peel off" peering sessions to private peering as the volume of traffic to and from those peers increases. The "40% rule" is sometimes used whereby both parties contractually obligate each other to migrate their public peering session to private peering sessions when either party reaches 40% aggregate usage of their public peering port. This provides a safeguard for those concerned about the "Blind Oversubscription Problem" described above. One ISP primarily uses private peering but does maintain public peering for reserve and emergency interconnect capacity. The ability to scale public peering quickly and seamlessly was seen as a key attribute here. History has shown that traffic generally grows, incrementally or sporadically from emergencies and spot events. At a recent NANOG Peering BOF Peering Debate, Vijay Gill (AOL) pointed out that there may be a "life cycle" of peering inclinations (see Appendix B), where peers migrate from public towards private peering as the scale increases. There is much debate regarding the virtues of de-peering, and of pulling away from public peering.
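The "40% rule" can be written down as a simple check. This Python sketch assumes each party reports the aggregate usage and capacity of its own public peering port, since neither side can observe the other's port directly; the function and parameter names are illustrative.

```python
def reached_threshold(used_mbps, port_capacity_mbps, threshold_percent=40):
    """True when aggregate usage has reached the given percentage of port capacity."""
    if port_capacity_mbps <= 0:
        raise ValueError("port_capacity_mbps must be positive")
    # Integer comparison avoids floating point rounding at the boundary.
    return used_mbps * 100 >= port_capacity_mbps * threshold_percent


def should_migrate_to_private(local_used_mbps, local_capacity_mbps,
                              remote_used_mbps, remote_capacity_mbps,
                              threshold_percent=40):
    """Apply the rule to both parties: either one reaching the threshold triggers migration."""
    return (reached_threshold(local_used_mbps, local_capacity_mbps, threshold_percent)
            or reached_threshold(remote_used_mbps, remote_capacity_mbps, threshold_percent))
```

The check is symmetric on purpose: a small peer filling 40% of a 1G port triggers the migration even if the larger peer's 10G port is nearly idle.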

Let's compare the costs of these two configurations: Public Peering using 10G Ethernet against Private Peering using many 1G Ethernet Private Peering Sessions. In the spreadsheet and graphic below you will see the costs of publicly peering lots of aggregate traffic over large 10 Gigabit-per-second public peering port(s) compared against attaching a switch and privately peering lots of private peering sessions directly to peer routers.

a. The first thing we notice is the similarity of the graphs. Both Private and Public peering routers used in this example are large-capacity, high-performance, and expensive, especially if one only peers with one other party at sub-1Gbps levels. At one peer each, both assuming a fully utilized gigE peering session (at 700Mbps), the cost of traffic exchange is between $12/Mbps and $26/Mbps. These are not bad prices compared against the transit market today.

b. Next, we notice that both configurations require a 10G ingress card and 10G peering card, and we also notice that Private Peering requires a second piece of hardware, a comparatively inexpensive switch. The steps of the cost functions are highlighted in the graph, but with an extra piece of hardware, why is Private Peering consistently less expensive than Public Peering? The answer is that the IX charges only $250 per month for the cross connect as opposed to $10,000 per month for each incremental 10G peering port. Note that in Europe, the price of a 10G peering port is less than half this price.

c. In the case of public peering, we upgrade the ingress and peering capacity when we reach our 15th peering session, and are set to continue peering until our 29th peering session, explaining the two bumps in the cost graphs.

d. For private peering we upgrade when we allocate our 15th peering session, again assuming the aggregated traffic can fit over the 10G ingress. After that point we allocate a second 10G ingress interface and are all set until we run out of private peering links. For our 25th peer, we deploy a second 24-port Ethernet card. Each of these steps is highlighted in the graphs and spreadsheets.
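The hardware steps and recurring fees described in the observations above can be sketched in Python. This is a simplified model using the figures from the text ($250 per cross connect, $10,000 per 10G public peering port, 24-port GE blades, a 10G step at every 15th peer); it leaves out router, switch, and rack costs, and the function names are illustrative.

```python
GE_PORTS_PER_BLADE = 24    # ports on each private peering GE blade
PEERS_PER_10G_STEP = 15    # a new 10G link is added at the 15th, 30th, ... peer
CROSS_CONNECT_FEE = 250    # monthly fee per private peering cross connect
PUBLIC_PORT_FEE = 10_000   # monthly fee per 10G public peering port


def ge_blades_required(peers):
    """Number of 24-port GE blades needed to terminate the private peers."""
    return -(-peers // GE_PORTS_PER_BLADE)  # ceiling division


def ten_gig_links_required(peers):
    """Number of 10G links needed under the 1.5:1 aggregation assumption."""
    return peers // PEERS_PER_10G_STEP + 1


def recurring_ix_fees(peers):
    """Monthly IX fees that differ between the two models, in dollars."""
    return {
        "public": ten_gig_links_required(peers) * PUBLIC_PORT_FEE,
        "private": peers * CROSS_CONNECT_FEE,
    }
```

At 15 peers, for example, this model charges two public ports ($20,000 per month) against fifteen cross connects ($3,750 per month), which illustrates why the private peering curve stays below the public one.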

In both Public and Private Peering scenarios we see a very compelling case for peering at 10G. The cost of peering quickly (for even a few peering sessions) dips below $10 per Mbps, well below the market price for purchasing transit.

Discussions with Patrick Gilmore (Akamai) and Richard Steenbergen (nLayer) highlighted two transition dynamics that have occurred during the past transitions of peering capacity (10M→100M IX Peering Port Size, and then 100M→1G Peering Port Size).

1) When an ISP transitions to the next size up, he will try to encourage his larger peers to transition as well so they both realize the benefits of economies of scale as shown in the graphs above. However, at this point, the peer may respond with sticker shock over the cost of the 10G hardware and, if in the same building, counter-propose private peering using dedicated gigE peering ports.

i. This erodes the network externality benefits for the community, as less traffic results in fewer benefits for the peering community.

ii. This is only possible if the ISPs are in the same building and cross connects are reasonably inexpensive.

2) When an ISP starts to fully utilize private peering capacity there is a transition step that involves

i. load sharing across a pair of cross connects which is difficult to manage,

ii. having both sides upgrade routers or at least interface cards or ports, which can be expensive, or

iii. migrating the private peering back to a larger public peering port, which can be very expensive.

In these cases, the Peering Coordinators face a transition challenge, and try to manage expectations internally and externally surrounding the timing and cost expected for the peering transitions.

Transport Costs into the IX are ignored.

We have, in some white papers in the past, included the cost of the local loop into the IX in the analysis. The assumption then was that the company investigating peering was already installed somewhere else with a transit provider in place. By including local loop in the analysis we compared the current situation against extending a presence at an IX only for peering. Conversations with Peering Coordinators revealed a flaw in previous research papers:

1. Those entering an IX to establish a peering presence also have access to (potentially many) transit providers and can aggregate peering and transit traffic over a single large capacity transport circuit. In this case, the other transit location is not needed.

2. In the case where both the transit and the peering locations are needed, a local loop is required. For the majority of those participating in 10G peering, the opportunity to sell transit is enough to warrant installation into the IX. In this case, the cost of local loop is included in the cost of selling transit, so should not be a cost allocated solely to peering.

Having said this, it is worth pointing out that there are precious few options for transport into an IX for 10G peering. Most reasonable and widely deployed are 10G peering off an existing multi-OC-192 backbone deployment and 10G directly run over fiber, potentially needing long reach optics. When factoring in the cost of the transport we see almost no difference between peering privately and publicly: the price differences are negligible compared to the monthly recurring costs of transport.

We assume the Peering Equipment as described is capable of handling the peering load and is configurable to the needs of the peer.

We assume that peers publicly peering will continue to publicly peer at 10G levels. One ISP pointed out that each of these steps in peering capacity (10M to 100M peering, 100M to 1G peering, and now 1G to 10G peering) did not always lead to both parties upgrading their public peering capacity. When one party requested the other party to upgrade their public peering capacity with them, the upgrade request would require a capital expense that could not be made at that time. In this case, the other Public Peer would make a counter-offer to convert the public peering session to a private peering session.

We assume that the ISP is not already at the IX. We assume that the ISP is not remote peering (requires colocation).

We are not defining a redundant router, redundant peering port or redundant peering interface card solution. In conversations with the Peering coordinators we saw great disparity in their views regarding this. Some of the larger ISPs required great levels of redundancy so any component could fail with minimal impact on operations. Others found it more cost effective to handle redundancy by provisioning larger backbone capacity to handle the fail over to another IX, hopefully located close by. For one large European ISP, the thought of putting that much traffic on a single network component was too scary to consider. "If I privately peer and I lose a 1G card, the rerouted traffic is at worst a 1000Mbps. I lose a 10G public peering card and I have a disaster!"

We do not consider backbone costs. Several ISPs pointed out that an ISP that would peer towards 10G capacity would probably need to peer in multiple locations. This would require a broader architecture with a more complex model that would vary based on degree of redundancy but would be more realistic.

We are ignoring the revenue producing potential of the 10G infrastructure. Josh Snowhorn (NOTA) pointed out that many ISPs are utilizing the same infrastructure to make money and for them the costs of peering are insignificant incremental costs.

Some have pointed out that the costs of peering should include the operations costs associated with managing peering, including Peering Coordinator salary, NOC time, documentation time, etc. Others argue that the costs are negligible, and that the staff are there anyway. Others still claim that one form of peering or the other saves significant engineering resources. We have not factored these costs into this analysis although we did highlight a few key differences between public and private peering in terms of peering administration.

Some feedback from the Peering Coordinator Community included some alternative configurations that should be considered. Eric Troyer suggested, for example, that switch ports are so inexpensive that it is common for ISPs to use a switch in between their router and the public peering fabric and trunk multiple gigEs together rather than step from a single gigE to a 10GigE connection to the switch. Some suggested that a Wave Division Multiplexer and switch architecture with layer three enabled would provide a less expensive (albeit less feature rich) solution that should be considered. There are many permutations that could be considered and it would be useful to take an ISP survey to identify and model the most common architectures and solutions.

The author would like to gratefully acknowledge the Peering Coordinator Community for sharing their insights, and in particular: Ren Provo (SBC), Richard Steenbergen (nLayer), James Rice (LoNAP), Todd Underwood (Renesys), Stephen Wilcox (TeleComplete), Vanessa Evans (LINX), Niels Bakker (AMS-IX), Chris Malayter (TDS Telecom), Patrick Gilmore (Akamai), Frank Orloski (T-Systems), Vijay Gill (AOL), Vish Yelsangikar (NetFlix), Nathan Hickson (eBay), Steve Feldman (CNet), Lane Patterson (Equinix), Joy Fender, Falk Bornstaedt (T-Systems), Remco Donker (MCI), Danny McPhearson (Arbor Networks), Josh Snowhorn (NOTA), Vince Fuller (Cisco), Philip Smith (Cisco), Andrew Odlyzko (UMN).

William B. Norton is the author of The Internet Peering Playbook: Connecting to the Core of the Internet, a highly sought after public speaker, and an internationally recognized expert on Internet Peering. He is currently employed as the Chief Strategy Officer and VP of Business Development for IIX, a peering solutions provider. He also maintains his position as Executive Director for DrPeering.net, a leading Internet Peering portal. With over twenty years of experience in the Internet operations arena, Mr. Norton focuses his attention on sharing his knowledge with the broader community in the form of presentations, Internet white papers, and most recently, in book form.

From 1998-2008, Mr. Norton’s title was Co-Founder and Chief Technical Liaison for Equinix, a global Internet data center and colocation provider. From startup to IPO and until 2008 when the market cap for Equinix was $3.6B, Mr. Norton spent 90% of his time working closely with the peering coordinator community. As an established thought leader, his focus was on building a critical mass of carriers, ISPs and content providers. At the same time, he documented the core values that Internet Peering provides, specifically, the Peering Break-Even Point and Estimating the Value of an Internet Exchange.

To this end, he created the white paper process, identifying interesting and important Internet Peering operations topics, and documenting what he learned from the peering community. He published and presented his research white papers in over 100 international operations and research forums. These activities helped establish the relationships necessary to attract the set of Tier 1 ISPs, Tier 2 ISPs, Cable Companies, and Content Providers necessary for a healthy Internet Exchange Point ecosystem.

Mr. Norton developed the first business plan for the North American Network Operator's Group (NANOG), the Operations forum for the North American Internet. He was chair of NANOG from May 1995 to June 1998 and was elected to the first NANOG Steering Committee post-NANOG revolution.

William B. Norton received his Computer Science degree from State University of New York Potsdam in 1986 and his MBA from the Michigan Business School in 1998.

Read his monthly newsletter: http://Ask.DrPeering.net or e-mail: wbn (at) TheCoreOfTheInter (dot) net

The Peering White Papers are based on conversations with hundreds of Peering Coordinators and have gone through a validation process involving walking through the papers with hundreds of Peering Coordinators in public and private sessions.

While the price points cited in these papers are volatile and therefore out-of-date almost immediately, the definitions, the concepts and the logic remain valid.

If you have questions or comments, or would like a walk through of any of the papers, please feel free to send email to consultants at DrPeering dot net

Contact us by calling +1.650-614-5135 or sending e-mail to info (at) DrPeering.net